[This is one of the finalists in the 2022 book review contest. It’s not by me - it’s by an ACX reader who will remain anonymous until after voting is done, to prevent their identity from influencing your decisions. I’ll be posting about one of these a week for several months. When you’ve read them all, I’ll ask you to vote for a favorite, so remember which ones you liked - SA]

Imagine that there was a generally acknowledged test for artificial intelligence, to find out whether a computer program is truly intelligent. And imagine that a computer program passed this test for the first time. How would you feel about it?

The most likely answer is: disappointed.

We know this because it happened several times. The first time was in 1966, when ELIZA passed the Turing test. ELIZA was a chatbot that could fool some people into believing that they were talking with a real human. Before ELIZA, people assumed that only an intelligent machine could do that, but it turned out that it is just really easy to fool others. Other tests for intelligence were playing chess, playing a whole variety of games, or recognizing cat images. Machines can do all this by now, and this is awesome. And yet, every success sparked new disappointment, because we didn't find any magic ingredient, some quality that would make a difference between intelligent and non-intelligent. When the groundbreaking GPT-3 and DALL-E suddenly could write news articles or poetry, or could dream up snails made of harp... the main improvement was that they used more raw computation power than the previous versions.

If you find this disappointing, then you will also be disappointed by "Consciousness and the Brain" by Stanislas Dehaene. The book is the condensed wisdom of three decades of cognitive research, and it tells you what consciousness is, how it operates, and why we have it. The book actually answers these questions. But if you were hoping that the book would Resolve Philosophy, tell you What Makes Humankind Unique, or whether Free Will exists, it doesn't do that.

It only tells you what consciousness is.

Consciousness: What is it Not?

In order to study consciousness, we first need to recognize it. Dehaene's approach to this is simple and bold: a perception or a thought is conscious if you can report on it. If a test subject sees the word "range" on a screen, and you ask her to report what word she has seen, then the answer might be

1) "I have seen the word range", or

2) "What word? There was no word!?"

In the first case, she has seen the word consciously, in the second not. This approach is more radical than it seems. Researchers are very cautious about taking introspection at face value. And rightly so, since our introspection is often wrong. The beauty of Dehaene's approach is that he only extracts a single bit of information (yes or no). It turns out that there are experiments which differ only in a minimal aspect (e.g., a slightly longer or shorter time delay), and which trigger either 1) or 2) reliably across many trials and many test subjects. I'll describe a few such setups later, but the point is that you can trust the reports because different people consistently give the same answer in the same situation.

It is important to understand that Dehaene's book is only about this definition of consciousness. It is not about cognition (in the sense of abstract reasoning) or meta-cognition (the ability to reflect about your own thoughts). It is not about self-consciousness (being aware of oneself). And of course, there are other definitions of consciousness. The most compelling alternative calls Dehaene's concept conscious access and distinguishes it from conscious perception. But for this review, I will stick with Dehaene's definition.

Another different, though related concept is attention. For Dehaene, attention is the gating mechanism that decides which information is allowed to enter consciousness. But this process itself is unconscious: we are not aware of all the options that were considered and discarded, only of the winner. This notion of attention is broadly in line with the mainstream of the field, though the exact definition varies.

Controlling Consciousness

To understand consciousness, the first step is to understand how unconscious processing works. Some glimpses come from blindsight patients, who lose the ability to see consciously due to brain damage. This can affect their whole field of vision, or just one hemisphere, or even just specific forms like lines. But they remain able to unconsciously process what they see. They will automatically walk around objects in their way, even though they swear that they don't see them. The effect can also be artificially produced in monkeys.

Fortunately, there are also ways to make perception unconscious in ordinary people:

- You don't always perceive images consciously if they are presented with a low contrast, or for a short time.

- Binocular rivalry occurs if your two eyes are presented with different images. In this case, most of the time you don't see a weird overlay of the two images, but instead your conscious perception flips between seeing either one or the other. It's a bit like staring at ambiguous images, but more consistent. So at any point in time, you perceive one of the images consciously and the other unconsciously.

- The attentional blink describes the effect that after a conscious perception, you are consciously blind to anything that you see in the next 200-300ms. Let's say you watch a fast stream of digits, each only visible for 100ms, and occasionally the stream contains a letter instead of a digit. You are supposed to detect the letters. When you see an "M", this enters your consciousness, and you detect it. But if 300ms later (three images later) there is another letter "S", you will not see it consciously. Actually, you will be sure that there was no "S" in the stream.

- With Transcranial Magnetic Stimulation (TMS) we can stimulate specific brain regions. By stimulating sensory areas, we can induce hallucinations, and we can either make them conscious or unconscious by regulating the strength of TMS. By interfering with the right region at the right time, we can also prevent real perceptions from entering consciousness.

All these setups are useful, but they are not 100% reliable, more like 80% at best. For example, binocular rivalry is different in autistic people. But there is one setup which always works, for everyone, and that is masking. In masking you see some shapes (the "mask"), then very briefly an image, and then the shapes again. If the image is shown for 30ms, then people do not consciously see it, while for 60ms they do. This works with almost 100% accuracy, and has become the main workhorse for consciousness studies. You can even mask only a part of the screen if you want. While subjects are presented with masked words, numbers, and images, the researchers can measure the brain activity with EEG, MEG, fMRI, or even implanted electrodes (for epileptic patients who have the electrodes for unrelated medical reasons). They can also measure how the skin starts to sweat and the body tenses up from the unconscious perception. And most easily, they measure how it affects the performance in a subsequent, conscious task. For example, seeing the word "bank" unconsciously will make you react faster to a related word like "money", an effect known as priming.

Should I Believe This?

Wait a moment, priming? Aren't those the wrong conclusions that were wiped out by the big replication crisis in psychology? Should I really believe these things?

I think yes. It is hard to convey in a review how painstakingly pedantic the field apparently is. There are a lot of different opinions on consciousness, and the experiments are scrupulously examined for any possible flaw or alternative interpretation, by both insiders and outsiders of the field. Just to give one example, in the priming study with "bank" and "money", the conclusion was that the words are processed *semantically*, not just as a bunch of characters. Dehaene mentions not one paper getting this result, but four. Then he describes how critics were not satisfied, and gives five more papers which addressed the criticism. But for reasons going deep into the precise setups, it was still not settled whether this actually proves the claim that the meaning is processed. Which made the original authors re-examine their results and publish a follow-up paper proving that indeed, the critics were right, and their setup was no proof. Which triggered an avalanche of follow-up work until the result was watertight. Dehaene cites 44 papers just on this debate. And there was never any doubt about whether the raw results were reproducible; the debate was about whether the experiments left room for alternative interpretations. I wish my own fields of research were just half as rigorous as what I read in this book.

I have read one other scientific book which breathes the same positive pedantry, and that is "Are We Smart Enough to Know How Smart Animals Are?" by Frans de Waal. I think there is a pattern. It helps that both fields treat a topic on which everyone has their own opinion, so they get a lot of know-it-alls from outside the field interfering with the discussion. But more importantly, they are based on methods which used to be taboo inside their scientific community. De Waal treated his animals as personalities and even bonded with them instead of keeping a neutral distance, and he took wildlife observations seriously. All of this was considered totally unscientific, so he was forced to be extra scrupulous in his experiments. For Dehaene and his colleagues, it was the paradigm that “subjective reports can and should be believed” -- as a source of raw data, without making the mistake of conflating the subjective belief with reality. When patients tell you after surgery that they had the impression of leaving their body and floating near the ceiling, then you should not believe that they actually floated. You should believe that "floating" was their true feeling, and that probably there is a neuroscientific cause for this feeling. Taking it seriously eventually enabled researchers to induce the feeling of leaving your body in any person, by using the right neural stimulation. But until the 90s, it was scientifically taboo to take subjective feelings into account, so experiments with low standards would have been torn apart.

I find these examples encouraging, because they show that even strong taboos can be overcome if your science is just good enough.

Not surprisingly, the replication crisis hit cognitive psychology much less hard than other fields like social sciences. Of nine key findings of cognitive psychology, all nine could be reproduced, including three that are core to the book. Yes, a lot of priming experiments were not replicable, and only a hard core survived. But this book is about the hard core. Of course, some of the ~1300 papers cited by Dehaene will be wrong, but I have a lot of trust in the general picture.

What We Can Do Without Consciousness

Ok, let us believe that the results on unconscious processes are real. What can the brain do with unconscious information?

A lot. The experiments fill a big chunk of the book, and I can't go into detail. I have already mentioned that your unconscious brain does not just process the letters of a word, it also deciphers the meaning. For example, a masked "four" primes you not just for recognizing "four", but also for "4", "FOUR", a spoken "four", and even for "three". In fact, it primes you better for "three" than for "two": the priming is stronger the closer the numbers are.

Unconscious perceptions can induce negative emotions, and you can unconsciously distinguish faces and abstract categories like "object" and "animal". You can unconsciously estimate averages. Unconscious perceptions can control your attention. An unconscious "stop signal" will activate your executive control center in the brain. Sometimes this prevents you from pressing a button that you were supposed to press, which seems like a mysterious mistake to you. You can also unconsciously detect errors: when you make a mistake in an eye-tracking task, your error control system will flash even if the signal never reaches your consciousness (in which case you don't notice that you have made a mistake).

You can even learn new things unconsciously. If you get a reward after each unconscious presentation, and you get more reward after image A than after image B, then you learn that A is worth more -- even if you never consciously see any images at all! For you, it seems like you look at a screen where nothing interesting happens, and you get completely random rewards.

What We Can NOT Do Without Consciousness

So if we can do so many things unconsciously, what do we even need consciousness for? What is the effect of a conscious perception?

Traces of unconscious perceptions are fleeting. They are almost completely gone after a second. This means that tasks which require working memory cannot be performed unconsciously. For example, in order to compute 12x13 we need to perform several subsequent steps, and use the intermediate result of one step as an input for the next one. This task cannot be performed unconsciously. It seems that the unconscious traces are too weak to be used as an input for a second step. Dehaene tries to pinpoint the exact level of complexity that can be handled unconsciously, and it seems to be very low. After seeing a number n unconsciously, test subjects are well above chance for the tasks of naming n ("But I haven't seen anything!" "Don't worry, just take a random guess."), naming n+2, or deciding whether n<5. But deciding whether n+2 is smaller than 5 is already at chance level. Dehaene suspects that complex language (conversation with others) also falls into this category of processes that require working memory. It is not mentioned in the book, but the same probably holds for trains of thought, including cognition.

Consciousness has a coordination effect. Imagine you see some line moving in a diagonal direction over the screen. We know very well what happens in the low-level visual areas. The neurons there cannot "see" the whole picture, they have only a limited perceptive field. For them, the image is ambiguous: it is impossible to decide from their local information whether the line moves upwards, or to the right, or diagonally. Some neurons will decide for "upwards", and encode that. Others will decide for "right" or for "diagonally". If the image remains unconscious, then these mismatches are never resolved. However, if the image enters consciousness, then after 120-140 ms all neurons in the lower layers suddenly start to encode "diagonally". Now they agree on the same interpretation of the world.

Dehaene phrases this in a way that ACX readers will love. For him, the unconscious (or pre-conscious) neural activity encodes a probability distribution over the possible states of the world. If we see the word "bank", then the meanings "financial institution" and "riverbank" are both represented by some neurons, so they both occur in this probability distribution. When the word reaches consciousness, then the brain *samples* from this distribution. So it decides for one of the possible options, and all neurons are overwritten with this meaning. For example, in binocular rivalry (when your two eyes see incompatible images) you will sometimes see option A, sometimes option B, but usually not both. Once you have drawn a sample, this is not a final decision. In binocular rivalry, your perception switches every few seconds between A and B. Some researchers even claim that the brain gets the Bayesian math right: if you present an image that is ambiguous in a very clever way, such that there is an objective underlying probability of 70% for A and 30% for B, then you will see A for 70% of the time and B for 30% of the time. Others claim that you can play "Wisdom of the Crowd" in single-player mode. Say you want to know the weight of a cow. Then take a guess. Now throw your guess out of the window, and take another guess. Finally, compute the average of your two guesses. The claim is that this average is better than your individual guesses.
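To make the "single-player Wisdom of the Crowd" claim concrete, here is a minimal simulation sketch. It is not from the book. It assumes that each guess is an independent draw from a Gaussian centered on the true weight, and the names `true_weight` and `noise_sd` and their values are made up for illustration. Real second guesses are correlated with the first, so the real benefit should be smaller than in this idealized setting.

```python
import random
import statistics

def simulate(true_weight=600.0, noise_sd=100.0, trials=20000, seed=0):
    """Compare the squared error of one guess with that of the average of two.

    Assumption (not from the book): both guesses are independent samples
    from the same distribution centered on the true value.
    """
    rng = random.Random(seed)
    single_sq_errors = []
    averaged_sq_errors = []
    for _ in range(trials):
        guess_1 = rng.gauss(true_weight, noise_sd)
        guess_2 = rng.gauss(true_weight, noise_sd)
        single_sq_errors.append((guess_1 - true_weight) ** 2)
        averaged_sq_errors.append(((guess_1 + guess_2) / 2 - true_weight) ** 2)
    return statistics.fmean(single_sq_errors), statistics.fmean(averaged_sq_errors)

single_mse, averaged_mse = simulate()
print(f"mean squared error, single guess:     {single_mse:.0f}")
print(f"mean squared error, average of two:   {averaged_mse:.0f}")
```

Under the independence assumption, the mean squared error of the average is about half that of a single guess. Note that this is a statement about the expected error: in any individual trial, one of the two guesses can still be closer to the truth than their average.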

Such sampling has obvious benefits. If I have to walk around a tree, then choosing either "left" or "right" is fine, but my whole brain and body should stick to this decision. So conscious sampling is very important for decision-making. But it goes beyond that. The way we perceive the world in a conscious moment is already a selection, as the rotating mask illusion nicely illustrates.

There is another interesting effect of consciousness that Dehaene only mentions casually a few times: it enables us to gauge our confidence. So test subjects can say "I am certain" or "this was just a guess". We can test these self-assessments with betting systems. And we are good at this, but only if the task was based on conscious perception. If it is based on unconscious perception, then we may still be good at the task itself, but our self-assessment is inaccurate. Often we underestimate our performance. By the way, simple betting systems (consisting essentially of the options "Yes", "No" and "Not Sure") also show that mice, monkeys and dolphins know how confident they are. We'll have to wait a bit for results on how consciousness affects self-assessment in animals, but researchers have speculated that the results should be similar, suggesting that a prediction market of unconscious mice may work poorly.

Consciousness and Learning

In this section, I will go a bit beyond the book, so this might be a bit less reliable than the rest. I have worked on research of neural plasticity and learning, so the ideas do not come entirely out of thin air. To understand the effect of consciousness on learning, you should first know that there are two different types of long-term memory, procedural and episodic memory. Procedural memory allows us to walk, ride a bike, or play the piano. For this review, I will pool it together with semantic memory: the knowledge that birds can fly, or things that you have learned by heart, like a song or the multiplication table. We can use procedural memory in automatic mode, and we can acquire and access it unconsciously. Acquiring procedural memory often requires a lot of practice and repetition. This type of learning is usually slow and decentralized in the brain: only the regions which are directly relevant to the task are involved in this type of learning. It shares many similarities with modern machine learning systems.

On the other hand, episodic memory refers to specific moments of your life, for example remembering what you had for breakfast or what your conversation partner said five minutes ago. This memory is one-shot, so the memory is instantaneously formed and does not require any repetition. Aspects of episodic memory can be transferred into procedural memory: when you learn the name of your new colleague, then this stays an episodic memory for a while, but it becomes procedural/semantic memory after a few repetitions. Sometimes we don't even need repetition, for example when we recognize a face after a single meeting, or when a child acquires new words. But this relies on complicated interaction between the two types of memory that I won't get into here. Usually, we can make a clear distinction. It seems that episodic memory without consciousness is plainly impossible, and I will come back to that.

But consciousness also helps with procedural memory, even though it can be formed from purely unconscious perceptions. First, think of consciousness as giving a boost to the learning rate. I have mentioned before that episodic memory (which requires consciousness) can be transferred into procedural memory during sleep, and this is stronger than the effect of the direct experience alone. You will still not be able to learn the piano in a week, but consciousness probably makes learning faster. Second, as Dehaene explains in his book, you only connect unconscious events with each other if they are simultaneous. This makes sense because unconscious neural activity fades away so quickly. Dehaene describes some classical conditioning experiments similar to Pavlov's dog, where the sound of a bell is associated with receiving food. But if (to stay in the picture) the bell is perceived unconsciously, then the connection is only made if the bell rings while the reward comes (plus/minus at most one second), not if the bell rings a few seconds earlier.

For episodic memory, I think that it is even more closely entangled with consciousness. I believe that the coherent activity of consciousness is exactly the form of neural patterns that can be stored in episodic memory. Thus a conscious perception creates a memory item that can be stored and retrieved later. Let me explain why. A crucial property of memory is pattern completion: that you can retrieve the whole memory pattern from activation of a small part. So the word "croissant" may trigger the image of a croissant, the smell of it, the taste, the texture, and so on. But the same works vice versa. The smell of a croissant also triggers the rest. There is no core of the concept "croissant" that needs to be present in order to trigger the whole concept. For procedural memory, this is not a big deal. Since this memory is formed slowly, there is enough time to nicely embed it into existing structures. But episodic memory is one-shot learning, it needs to be formed in a second. (There is some long-term consolidation later during sleep, but this only shifts the problem: the brain still needs to maintain traces of the pattern for 16 hours.) And it's really hard to embed a complex concept so deep into an existing network that you still have pattern completion. Keep in mind that every change in the brain needs to be cautious, because you have a lot of systems that should still be working after the change. In machine learning, in case of doubt you set your learning rates really low, and that is for the same reason: you don't want to overwrite all the existing functions of your network. And the task is more difficult for more complex patterns. If each neuron represented its own interpretation of reality, then it would be pretty hopeless to encode all this into a retrievable pattern in just one second. But it gets a lot easier if the activity of all neurons throughout your whole brain is highly consistent, and that is precisely what consciousness seems to achieve. This is why I believe that consciousness might create the items that we can store in our episodic memory.
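Pattern completion can be illustrated with a Hopfield network, a classic textbook model of associative memory. The sketch below is only that, a toy illustration: it is not a model from Dehaene's book and makes no claim about how the brain actually stores episodic memories. Units take the values +1 or -1, a 0 in a cue means "no information about this unit", and the two 16-unit patterns (`croissant` and `other_memory`) are arbitrary made-up examples.

```python
def train(patterns):
    """Store patterns of +1/-1 values with the one-shot Hebbian rule."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for pattern in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    weights[i][j] += pattern[i] * pattern[j] / n
    return weights

def recall(weights, cue, sweeps=5):
    """Update units one at a time until the cue settles into a stored pattern."""
    state = list(cue)
    n = len(state)
    for _ in range(sweeps):
        for i in range(n):
            total_input = sum(weights[i][j] * state[j] for j in range(n))
            state[i] = 1 if total_input >= 0 else -1
    return state

# Two hypothetical stored memories (chosen to be orthogonal to each other).
croissant = [1] * 8 + [-1] * 8
other_memory = [1, -1] * 8
weights = train([croissant, other_memory])

# Two different partial cues for the same memory: only 5 of 16 units are known.
cue_front = croissant[:5] + [0] * 11   # e.g. "the word"
cue_back = [0] * 11 + croissant[11:]   # e.g. "the smell"

print(recall(weights, cue_front) == croissant)
print(recall(weights, cue_back) == croissant)
```

Both cues retrieve the full stored pattern, although they share no active units, which mirrors the point that no particular "core" of the concept has to be present. The Hebbian rule used here also stores each pattern in a single pass, but it only works well for a small number of patterns that do not overlap much with each other.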

Properties of Consciousness

For an unconscious perception, the main brain activity is in perceptual regions (visual regions for images, auditory regions for sounds, and so on). The signal can travel further into the brain, but only reaches a few regions, and gets weaker and weaker. A conscious perception does not get weaker, it gets stronger as it travels. Dehaene compares unconscious perception with a wave that runs out at the shore, while a conscious perception is like an avalanche that gains momentum. After 400ms, the conscious avalanche has activated large parts of the brain, which Dehaene calls global ignition. Moreover, all the brain parts synchronize, and information flows from all parts into each other. This is not a metaphor, "flow of information" is a well-defined quantity, measured by so-called Granger causality. Granger causality is a useful concept with a terrible name, since it is about a complex form of correlation, and not about causality. Whatever it is, it suggests strongly (without being a proof) that all parts of the brain talk intensively with each other.

Dehaene goes into a lot of details on the exact form of these signals and exchange, but I will cut it short. It all fits very well with the assumption that in a conscious moment, the brain creates a coherent worldview, which we may call "sampling", "coordination", or "creating a memory item". Dehaene compares it with a memo that the CIA prepares for the president: it does not contain all the details of hundreds of subreports, but is a highly condensed summary of the worldview of the CIA. This metaphor expresses nicely how the information is compressed, but mind that it leaves out two aspects. In a conscious moment, information also flows downwards to the low-level areas, and they change their activation patterns to make them compatible. So it is as if all CIA members would read the presidential memo, and start to rewrite their own local reports to match this worldview. (I hope that the CIA doesn't work this way.) Second, in the brain there is no single president to make decisions. There are regions that we call "executive control regions", but they are notoriously hard to pinpoint, and there is no region which is always involved in decisions. It might be more accurate to think of a committee meeting of all brain regions, who discuss and decide on a topic.

Consciousness has a severe disadvantage: it is really slow. A conscious perception takes about half a second, 500ms. This is ages compared to neural transmission speed. If you are shown two images, and you are supposed to pick out the one with the animal, then your eyes start moving to the correct image after 70-100ms. Not with 100% accuracy, but far above chance. In a 100m sprint, you really don't want to wait for 500ms before you start running. Or imagine playing computer games with 2 frames per second, which is NOT FUNNY. So a lot of our everyday life runs on autopilot. This is also the reason why predictions are so important for the brain. Some cool experiments show that when we are shown a surprising image, the time we believe the image to appear is 300ms after it actually appears. But if we can predict the image, there is no such delay, and we perceive the timing correctly.

Consciousness is also exclusive. While a conscious perception is processed (which may consist of several components, like an image and a compatible sound), the activity blocks off other perceptions. They are either missed completely, or can only be processed after the 500ms are over. There is something like a buffer in which a perception can be stored until consciousness is ready again, but it will not be processed before that. (Again, there are cool time perception experiments which show that quite convincingly.) So our consciousness runs at most at 2 perceptions per second, and unlike unconscious operations, it cannot be parallelized.

Insights Into the Inside

Now that we understand consciousness, we have quite a lot of tests to tell apart conscious from unconscious processing. Indirect clues come from abilities. It seems that working memory requires consciousness, and Dehaene has developed some very detailed tests to decide whether someone is conscious. Not just for fun; he works with patients in long-term coma (more precisely, in a vegetative state). Some of them are fully conscious locked-in patients, but this is quite hard to detect. Dehaene has developed tools for detecting traces of consciousness in such patients, and they can predict (to some extent) whether the patients will eventually recover. They are also useful for developing communication devices for locked-in patients. But we can also apply the tests to healthy individuals and see what we get. We are not conscious during anesthesia (what a surprise), but sleeping is already more complicated. Most sleep phases are unconscious. In dream phases (REM sleep), external stimulation usually does not spark consciousness. However, the brain does react like a conscious brain if the stimulus is directly implanted into the brain via magnetic stimulation (TMS). So perhaps we are conscious in dreams, and we are only cut off from outside perception. But it is too early to be certain. Also, although the book does not discuss this point, I wonder whether we are conscious during all of our waking hours. Probably we don't use the full bandwidth of two conscious perceptions per second the whole day. How conscious are we when we enter the "flow" during a marathon? Can meditation suppress consciousness? I don't know.

A part that really made me think is Dehaene's theory of schizophrenia. I have heard a lot of explanations for schizophrenia, and most of them sound superficially compelling, but collapse pretty quickly when you dig into them. This one... has not collapsed yet, at least not for me. Dehaene believes that schizophrenia is what you get if you (partially) lose conscious perceptions. This sounds ridiculous at first, but as always, Dehaene makes it really hard to shrug this off as obvious nonsense. He claims that in order to reach consciousness, a masked signal needs to last "much longer" for schizophrenic patients. I expected that "much longer" means "whatever passes your p-value test", but I looked into the study. It was 90ms versus 59ms. That’s a lot. The maximum of the control group (n=28) was roughly the same as the average of schizophrenic patients (n=28)! And of course, it doesn't stop there. Also for other effects of consciousness, schizophrenics really stick out. (The link is worth clicking!) Dehaene describes how the other neural signatures of consciousness are disturbed or even plainly missing. He describes how schizophrenia can be caused by certain neuro-anatomic damage (in terms of regions and neuron types), which happens to be exactly the type of damage that would impair consciousness. He tells compelling stories on how consciousness would explain the positive and the negative symptoms of schizophrenia. He mentions some weird autoimmune disease which gives you super-strong schizophrenic effects (from hallucinations to paranoia) until you spontaneously lose your consciousness after three weeks and possibly never regain it. I am still cautious about the story, simply because I have a really low prior that we ever find a theory of schizophrenia. But if you hope for such a theory, you should know this candidate.

Conclusion

So consciousness has upsides and downsides. It is really slow, it is exclusive, and it simplifies the world into a highly compressed sample. This can be useful in its own right, for example to make a decision. A lot of information is lost in this process, but apparently the resulting pattern is so simple that it can be processed further. Since all parts of the brain participate in a conscious event, it is also universally available in the brain. Dehaene calls this function the *Global Neuronal Workspace*. Propagating something to consciousness is similar to loading something into a register of a computer, so that it can be processed further. I believe that consciousness does even more: it creates the item in the first place. The item can then be stored and retrieved, and it can be used as input for mental algorithms. Of course, a conscious thought does not need to be triggered from the outside. It can also be the next step of a mental algorithm, like the next thought in a train of thoughts, and it can come (fully or partially) from episodic memory or mental associations. Dehaene adds that due to their simplicity, these memory items can often be expressed in language, and thus they can be transmitted to others. Thus consciousness is probably a necessary factor for the complex language and culture of humans. Necessary, but not sufficient: many animals around us are conscious, too. Humans are special in many ways, but being conscious is not among them.

After reading this long review, does it still make sense to read the book? Yes, absolutely! It describes tons of really fascinating experiments that I had to skip, and it is really pleasant to read. As are other books by Dehaene, especially "Reading in the Brain" and "The Number Sense", which discuss how the brain processes texts and numbers.

Appendix A: The Hard Problem of Consciousness

Do you feel disappointed by the book? At least some people did. When the program ELIZA passed the Turing test, a common reaction was “This is not what we had meant”. Some reactions to this book were similar. Dehaene’s concept of consciousness is much more mundane than the lofty associations that we commonly attach to the word consciousness. But if we actually want to nail it down and get a more substantial definition than “whatever elevates me above mere animal”, then “the thing that happens during conscious perceptions and does not happen during unconscious perceptions” sounds pretty convincing to me.

But other people think differently. Dehaene was accused of dodging the hard problem of consciousness. This is "the problem of explaining why and how we have qualia or phenomenal experiences. That is to say, it is the problem of why we have personal, first-person experiences, often described as experiences that feel "like something"" (wikipedia link above). Dehaene has been criticized for "dismissing the hard problem in barely over a page of text". For Dehaene, as for other renowned researchers, the problem does not exist, and I share their opinion. But there are equally renowned researchers who accept the problem. Even though the book hardly discusses the topic, I believe that it has implications for the question, and I will explain my point of view below. If you believe in the hard problem of consciousness, then my perspective will probably not convince you otherwise, but it may help to pinpoint further where exactly we disagree.

A sensory input like the color "red" leads to some neural activity. While the neural activity is active, there is some perception of it. There are at least two different ways of phrasing what happens.

- Classically, we would say that "I" or "the brain" experiences the color red. This is also called a quale, plural qualia. The hard problem of consciousness assumes that this experience is something that goes beyond neural activity. So even if an outsider perfectly knows the neural activity, then this does not give experience. A crucial point of this perspective is that there is an observer, which is usually called "I", and sometimes "my mind" or "my brain".

- In the book Consciousness Explained, Daniel Dennett argues that a more appropriate description is the multiple drafts model (also called Cartesian theater): the brain is a collection of many different regions, all of which follow their natural task of observing the surrounding neural activity. Each region acts like a little agent with its own perception. The experience is then just the collection of these local experiences.

Critics like John Searle find that the multiple drafts model can't explain why experiences are first-person, i.e., where the "I" comes from. And I think this is precisely the point that Dehaene's book helps us to understand. My claim is:

Given what we know about consciousness, if we assume the multiple drafts model, then we should naturally expect the subjective perception of a first-person observer.

To explain the claim as clearly as possible, let me take a small detour.

The brain is very good at decomposing the world into units that make sense. For example, when I look out of the window, I automatically decompose the image into several houses, a few people, a dog, and so on. The units can be nested, like a window that is part of a house, and can even overlap. In general, the brain tries to find neat and clean units if possible. An example is our own body. We have a very clear body schema, i.e., we consider our own body as a unit (with various sub-units, of course). My hand is part of my body, the cup of tea in my hand is not. And that makes a lot of sense. On the other hand, qualitatively the difference is not so fundamental. Both the hand and the cup are objects outside of your brain. We can control the hand by sending signals to our joints, and we can control the cup by grasping it, which is also achieved by sending signals to our joints. So it is the same sort of control on a fundamental level. But on any practical level, the difference is huge. Even an alien observer would agree that the decomposition into "your body" versus "not your body" is sensible. However, simple tricks like the rubber hand illusion show that this body schema can be re-learned. So the body schema is a construct that is shared by almost all people (some get it wrong), because it makes so much sense, both to us and to alien observers.

What would an alien observer say about the neural activity in our brain? There are a lot of different brain areas with different functional rules. Sometimes a few areas interact with each other, and our visual system may activate our motor control system without many other brain areas being involved. A lot of neurons plainly contradict each other. Perhaps the alien would conclude that the subunits have a lot more descriptive power than just the coarse category "brain". So it might decide to describe it as Cartesian theater. But now imagine that the alien is only allowed to observe the brain *in conscious moments*. As we have learned, in these moments all regions of the brain have agreed on the same interpretation of the world. In the theater picture, the alien would only observe the actors at times when they all speak in a perfect chorus. In this case, it might conclude that the sub-units are not so important, and that the chorus is much more important than its parts.

I think this is precisely where our concept of "my mind" comes from. Remember that our episodic memory might be exclusively formed from conscious moments, and also implicit learning gets a strong boost from consciousness. So when "we" (our brain, or the actors in the Cartesian theater) learn a "mind schema", then this is based on the conscious moments, not on the activities in between. On this basis, it makes sense to merge all our neural activity into a single unit, which we call "I" or "my mind". Just as we form the concept of "my body", but even stronger, since we never "observe" different parts of our mind to be incoherent or even independent. Once the concept of "I" is formed, any conscious perception is connected with the "I" unit, so a conscious perception of the color red is translated into "The neural activity of the myself-unit represents the color red", which in common terms is "I experience the color red".

Appendix B: Are Robots Conscious?

Are babies, animals, or robots conscious? For babies, yes, they are conscious. Their consciousness is 3 times slower than that of adults, which probably has purely physical reasons. The cables in the baby brain are not insulated. The insulation just doesn't fit into the baby skull. Uninsulated fibers have lower transmission speed. The insulation is added later in several surges, the last and most drastic of which happens during puberty. Be patient with your babies and kids, and yes, even with your teens.

A lot of animals are conscious, too. For mammals, it looks like a universal Yes. We have pretty clear evidence of consciousness from apes, monkeys, dolphins, rats and mice, some of which came after Dehaene's book. The question becomes trickier as animals become more different from humans, since the brains become more different, and we are more and more forced to rely on indirect clues. For an octopus, where most of its neural power is dispersed over its arms, the behavior suggests that the answer is still yes, but neural signatures can no longer be taken as confirmation, and a lot of tests don't work anymore. And for robots, neural signatures don't help at all. Dehaene has no doubt that we can eventually build robots which are conscious in exactly the same way as humans are, but even if true, that doesn't tell us whether my laptop, GPT-3, or R2-D2 are conscious.

But do we have to rely on neural signatures? Can't we generalize and define it by the all-parts-talk-to-each-other-and-there-is-great-coherence-and-Granger-causality-thingy? So if a robot satisfies these criteria, is it/s/he conscious? The book only touches this question lightly, but I'll add a few of my own thoughts. Since the question about robots can become confusing, let me instead discuss the obvious alternative question of whether and when the Swiss canton of Glarus is conscious.

Switzerland is a successful little country in Europe, and it may have the weirdest political system that you have ever heard of. After each election, the government is formed from all parties in the parliament, more or less proportional to the number of seats. (It has only seven members, so small parties are left out.) Currently, the government consists of two members from the social democrats, two from the liberal party, one from the moderate right, and two from the far right party.

Switzerland is an egalitarian society. It doesn't have a capital city because the Swiss didn't want to single out a city. Bern is the seat of government, close enough. Switzerland also doesn't have a head of state like the Queen, which is slightly awkward since the country can't make official state visits. (But the Swiss prefer not to interact too much with other countries anyway. They pondered for 50 years before joining the UN in 2002.) Switzerland also doesn't have a head of government. The closest thing is the mostly empty title "Bundespräsident", which rotates annually among the members of government. The title always goes to the member who hasn't held it for the longest time. (There are tie-breaking rules in case several members of the government have never held it.) But the Bundespräsident doesn't really have additional power.

For such an egalitarian country, it is not surprising that Switzerland is federal: it consists of 26 cantons (similar to US states, just smaller). Of course, every canton can make its own laws, and most cantons have parliaments for that. But the canton Glarus, with a tiny population of 12,000, does not have a parliament. Instead, it has a Landsgemeinde: once a year, the citizens of Glarus assemble at a big open space, and decide jointly on new laws, the tax rates for the next year, and important offices like judges. Every citizen can rise to speak on any of the discussed items, and can propose changes (which even go through sometimes).

I hope you see the analogy to consciousness. Once a year, the Landsgemeinde creates a coherent state among its citizens. Communication is open from everyone to everyone, until a common ground is found, and a decision is made which is compulsory for everyone. You could even say that the Landsgemeinde creates a permanent and consistent "memory" since its decisions become codified law.

So should we say that the canton of Glarus becomes conscious once a year? Probably... not. There are similarities, and after reading this review you might understand what I mean if I call the Landsgemeinde a conscious event of Glarus. But in any other context, I would just cause utter confusion. More importantly, it goes against the intuitive meaning of consciousness for 99% of the people. So if we want to describe the concept of "all-parts-communicate-and-are-coherent-and-Granger-causal", then we should better invent a new name for it. Actually, there have been attempts to formalize and measure this, most famously the Integrated Information Theory (IIT) by Giulio Tononi. But the hope that this could give a formal definition of consciousness has the same problem as the idea that the Landsgemeinde is conscious. In a great rebuttal, Scott Aaronson has discussed the idea that IIT captures consciousness, and concluded that it "is wrong — demonstrably wrong, for reasons that go to its core. [This] puts it in something like the top 2% of all mathematical theories of consciousness ever proposed. Almost all competing theories of consciousness, it seems to me, have been so vague, fluffy, and malleable that they can only aspire to wrongness."

So we have little hope to capture consciousness in a nice mathematical theory. But why would we even want this? One motivation comes from the idea that conscious beings are qualitatively different from others, and deserve human rights, or robot rights. But consciousness is a terrible indicator for that. There is no magic ingredient, no godly spark in consciousness. It’s nothing special. Or at least it is much more widespread among species than generally assumed, and it means a lot less than philosophers often make out of it. (To be fair, they might be talking about a different concept than “having conscious perceptions”. But I think Dehaene has the better claim on the term consciousness.) At least for me, this approach to ethics seems to be a dead end, and the question of consciousness of robots has become a lot more boring after reading this book. Robots won't be conscious in the exact same way as we are. They might be “conscious” in a fascinating different way, but that seems to become a matter of definition and taste, not a matter of insight. So we should not base our treatment of robots on the question whether they are conscious.

I would guess that the same problems will arise with other concepts like meta-cognition or self-consciousness, once we properly understand them. I don't have an alternative solution, just a prediction: in the end, these discussions will not matter, because whether robots will be granted robot rights (assuming they don't just seize them) depends on whether and how people will subjectively perceive them as intelligent personalities. I doubt that any theory on this issue can win a political fight against the feelings of people.

It’s 2022, so of course enough people are worried about an epidemic of Monkeypox that it feels like a necessary public service to do a post about Monkeypox. I didn’t get to it especially fast exactly because I am not all that concerned, but I’m not entirely not concerned, so here we are.

Sarah Constantin has a basic facts post. Basic facts if nothing has changed:

- The smallpox vaccine is 85% effective at stopping spread, but it uses live virus and leaves a scar. It is not generally available but we have a strategic stockpile that is sufficient for the entire United States (like we should aim to do with all possible pandemic viruses, but Congress is refusing).

- There are claims of both a 2.1% and a 3.6% case fatality rate from this version of monkeypox. Most deaths are in immunocompromised individuals. Can also be dangerous for pregnant women or children.

- Most cases historically come from animal-to-human transmission.

- Person to person transmission comes from prolonged social contact, sexual contact, living with the infected, saunas or contact with infected clothing or bedding.

- Most cases in the outbreak are men who have sex with men, but that’s not true of cases in Africa.

- Historical R0 estimate of 1.0.

- The WHO is treating this as a standard outbreak and following standard procedure. Link includes diagnostic criteria.

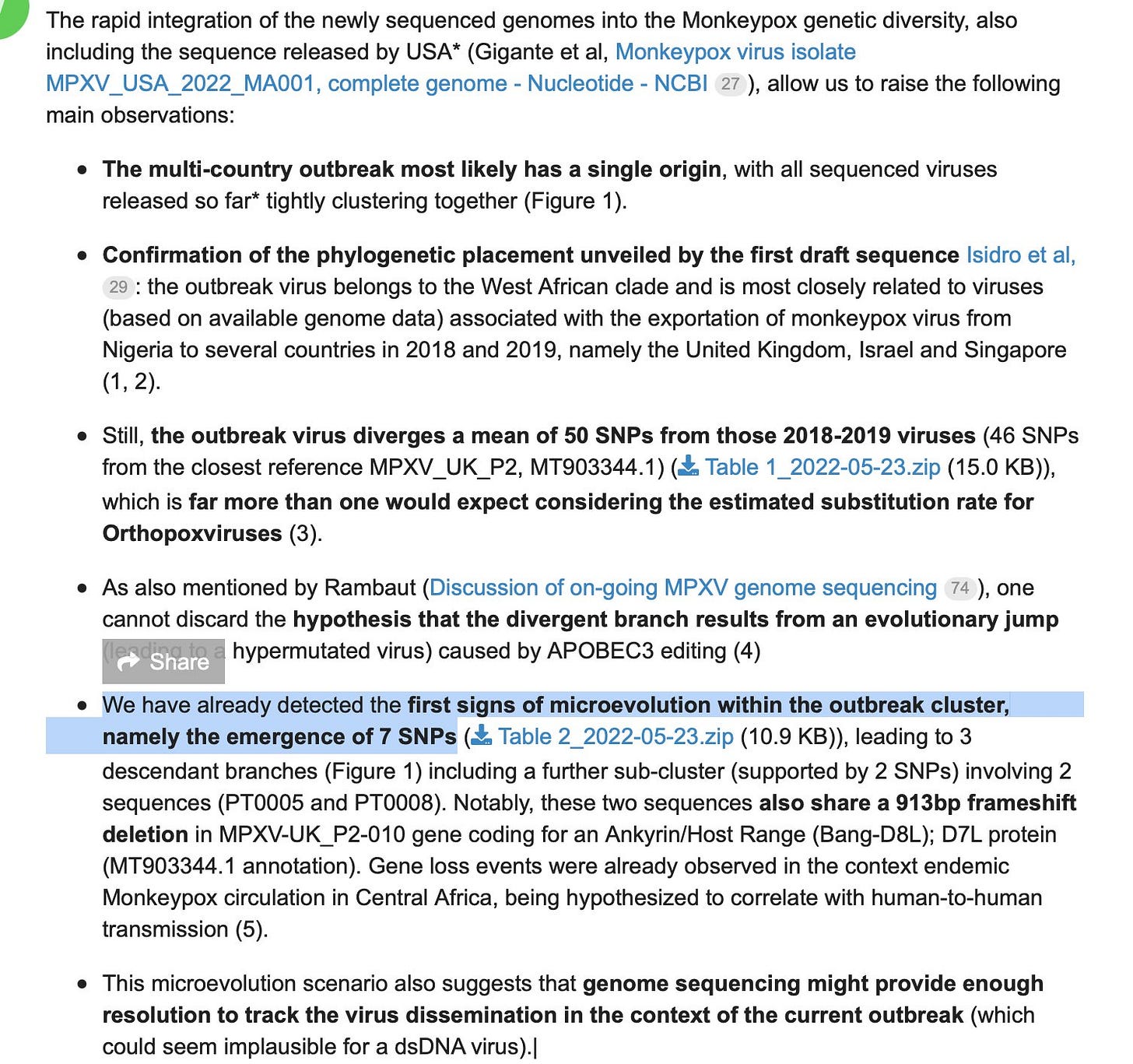

- Except that it looks like it has changed 50 SNPs, so maybe things changed.

This is a link to a spreadsheet of cases.

This thread also has a version of basic facts. This thread also covers the facts. This thread is excellent and has a bunch of facts. I go over that one later.

This thread contains links to 10 scientific papers, none of which I have read. I haven’t reached the ‘read all the papers’ level of dive yet, which tells you I’m not that worried.

Here is an OurWorldInData monkeypox data explorer. As of writing this cases are continuing to climb.

I have more hope that the WHO will respond reasonably to something like this, that has been around for a while, as opposed to Covid-19 which was novel. That doesn’t mean I’m counting on it.

I am not overly concerned, but I would describe the current situation as ‘concerning’ and would be much more concerned if no precautions were being taken or if people were generally acting as unconcerned as health officials are urging us to be.

The situation does not seem all that analogous to the situation with Covid. This is not a new virus. It is a virus that has been around for a long time. There is the concern that as our vaccination campaigns against smallpox fade into the past the population gets incrementally more vulnerable in a way that might require countermeasures, but it seems highly unlikely those countermeasures would need to be onerous or even include vaccination. The pattern of spread only makes sense if this is not that easy a virus to catch.

One thing that does make me worry is that the worst-case scenario, if things do get bad, is not that everyone gets vaccinated. That would be the worst-case scenario for a saner civilization. In ours, there is every reason to expect widespread resistance to such a campaign were it to prove necessary, and for this and other reasons a widespread reluctance to pull the trigger on the campaign. And of course, because of the insanity of our healthcare system, unless and until we’re ready for a major vaccination push it is unlikely that the shot will be generally available at all. So the more realistic worst-case scenarios are either that we end up having an epidemic without the vaccine because of these factors, or we have the vaccine but few people choose to take it.

The good news is I do not expect things to come to that unless the genetic changes are a big deal.

The bad news is there is some chance the changes are a big deal.

Don’t Panic

The public health authorities are saying ‘Don’t Panic’ in large friendly letters. As readers of the guide know this is good advice, but not if it crosses over into not reacting to potential problems. Even if everything is probably going to be fine, the expected value of the problem could still be in ‘buy very out of the money puts’ territory.

And when experts say monkeypox does not pose a serious risk to the public, and ‘caution against comparing it to Covid-19,’ how do you instinctively react to that? How do you update? After all that we have seen these past few years, those are not reassuring words.

Nor are these:

At least 17 infections of the rare disease have been confirmed in non-endemic areas such as the United States, United Kingdom, Portugal, Sweden and Italy, and dozens of possible cases are under investigation in those nations as well as in Canada and Spain.

If we don’t have a problem, why are cases showing up in this many different countries at once?

Once again, as with Covid-19, we have few cases so far but we also have confirmation of community transmission. And you know what really doesn’t make me feel better?

Health experts stress the risk to the public remains low and most people don’t need to be immediately fearful of contracting the illness.

What are the words ‘most’ and ‘immediately’ doing in that sentence? If they’re needed in that sentence, then it does seem like some amount of slight panicking might be in order. When something is on an exponential growth curve and you are told it isn’t an immediate problem that is not reassuring.

Also, where have we heard this before?

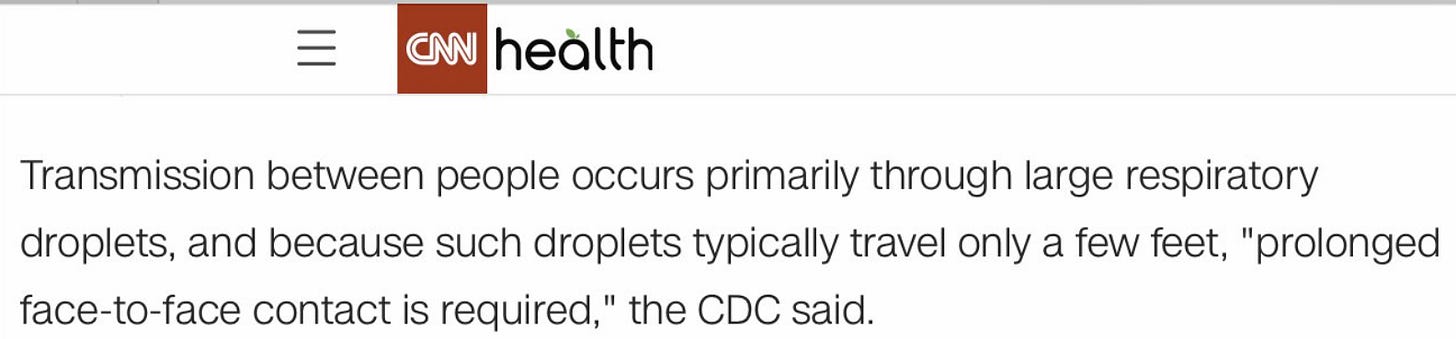

The disease can also spread from person-to-person via large respiratory droplets in the air, but they cannot travel more than a few feet so two people would need to have prolonged close contact.

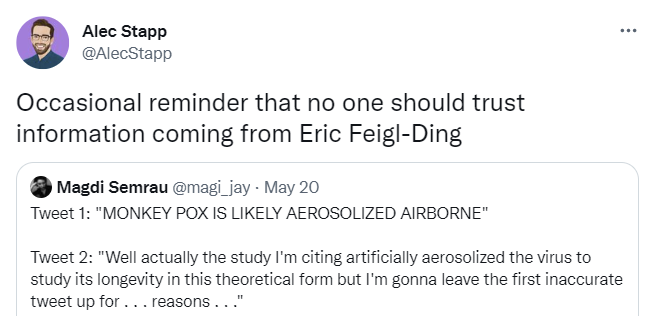

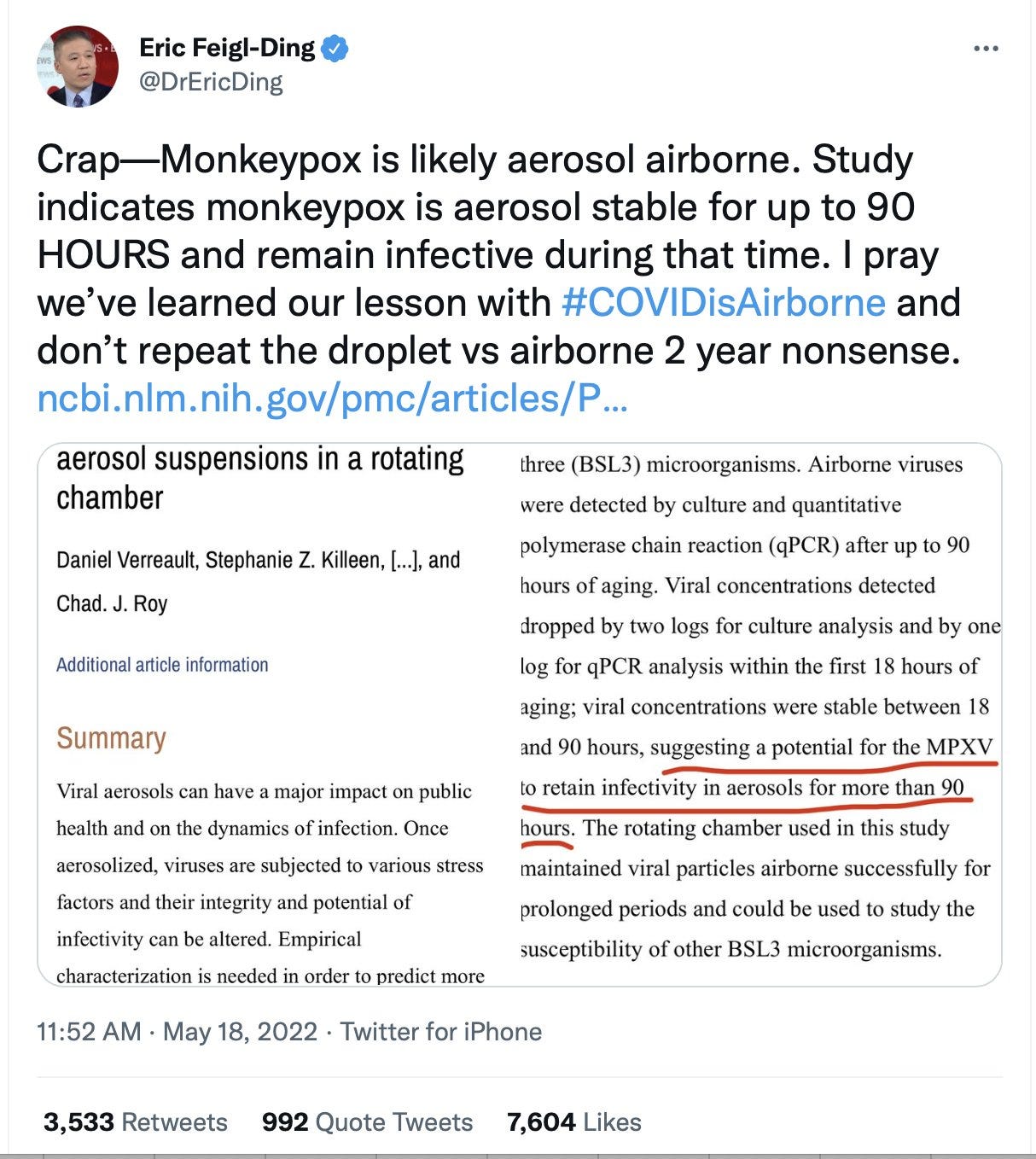

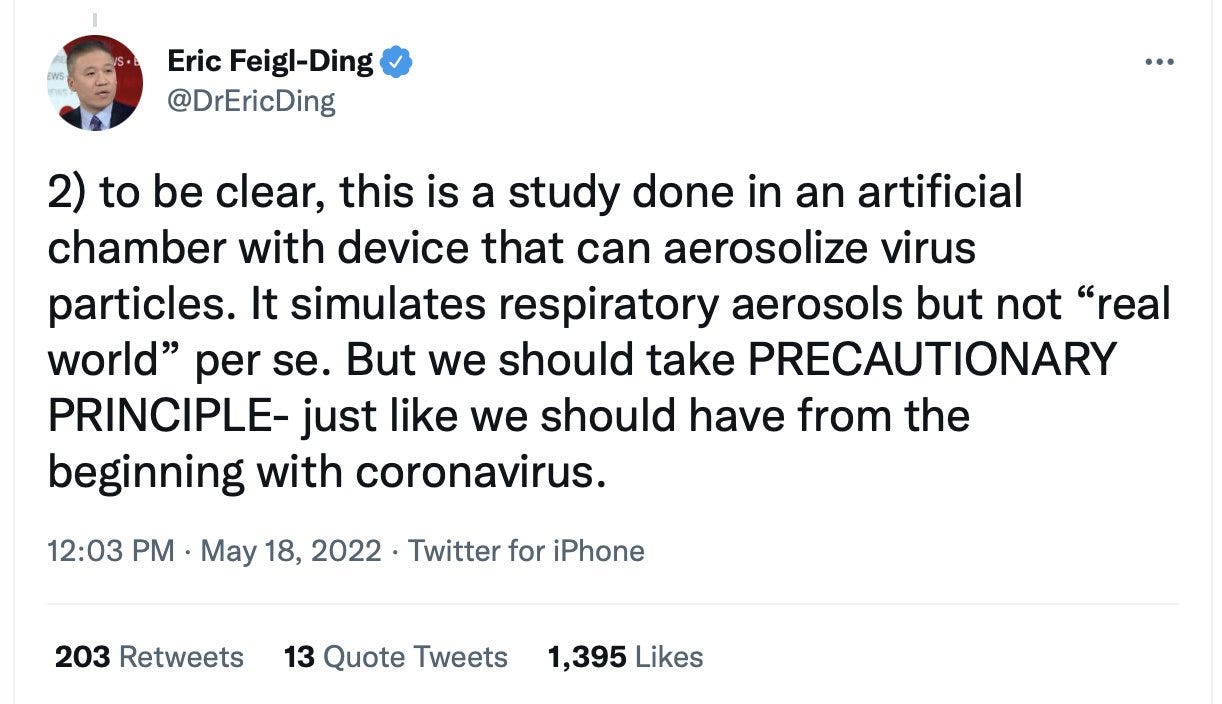

We even got a mistaken (or at least totally premature and completely irresponsible) claim that monkeypox is likely to be airborne, from a known unreliable source.

There are two things to say about this, other than the reminder not to listen to Eric Feigl-Ding.

One is that underneath it all he’s actually right about the precautionary principle here. This might be airborne (newly or otherwise) and it is damn well worth checking to be sure and until then not assuming that it isn’t.

The other is that there’s another easy-to-jump-to conclusion here, based on the fact that wait, we at least kind of did WHAT?

Gain of function research continues. Even assuming nothing has gone seriously wrong yet, it’s only a matter of time.

On the core question of whether monkeypox is safely not airborne, I’d say I do not actively disbelieve it, but it rhymes somewhat too much in too many ways to believe and not verify even if nothing has changed. If I had to guess how likely it is to be airborne I’d have to say at least ~5% in my current epistemic state. Which is kind of a lot.

She added this transmission route is different from that of COVID-19, which is spread through small aerosols that can hang in the air for several minutes.

“Aerosols are not subject to gravity but large droplets, they get pulled to the ground,” Doron said. “Also, monkeypox isn’t an illness that is transmitted during the asymptomatic phase, which is what made COVID such a formidable foe.”

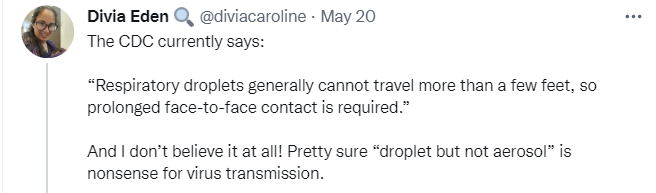

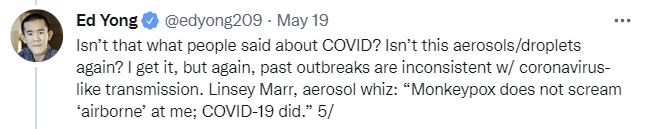

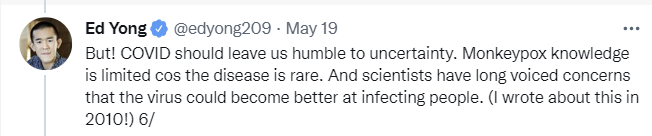

Early in Covid-19 we were told no asymptomatic transmission. We were told it wasn’t an aerosol. So, again, at best, need to verify – ‘expert’ claims don’t cut it anymore in 2022. I share most, but not all (I’m definitely not at ‘pretty sure’), of Divia’s skepticism here.

I applaud Nature for the careful wording here, emphasis mine. Post is a good overview.

Unlike SARS-CoV-2, which spreads through tiny air-borne droplets called aerosols, monkeypox is thought to spread from close contact with bodily fluids, such as saliva from coughing.

I also do put substantial weight on the ‘this doesn’t rhyme as much as you think it does’ arguments.

Also Nature brings up the possibility something changed beyond fading inoculations.

Answers to those questions could help researchers to determine whether the sudden uptick in cases stems from a mutation that allows monkeypox to transmit more readily than it did in the past, and whether each of the outbreaks traces back to a single origin, says Raina MacIntyre, an infectious-diseases epidemiologist at the University of New South Wales in Sydney, Australia.

Although I did chuckle a bit at this attempt to pretend obvious things aren’t obvious.

Another puzzle is why almost all of the case clusters include men aged 20–50, many of whom are men who have sex with men (MSM).

What is reassuring is monkeypox has been in a form that looks like this for a long time.

“It’s important to note this is not a new virus,” said Dr. John Brownstein, an epidemiologist at Boston Children’s Hospital and an ABC News contributor. “This has been around for a long while. It’s mostly endemic in parts of western Africa but you will occasionally see it in other parts of the world.”

People are typically infected by animals through a bite or a scratch or through preparation and consumption of contaminated bush meat.

The first case was observed in 1970 and in Africa it is considered endemic. If something can be long term endemic in a large region and neither get completely out of control there nor spread to other regions, then for it to suddenly break through quickly, either it is a short term blip that will fizzle out or something must have changed.

Also somewhat reassuring is this description which I trust a lot more than the claims on transmission.

Monkeypox generally is a mild illness with the most common symptoms being fever, headache, fatigue and muscle aches.

Patients can develop a rash and lesions that often begin on the face before spreading to the rest of the body.

“It starts out as spots, then small blisters like you’ll see with chickenpox, then pus-filled blisters and then they scab over,” Doron explained. “It’s a long illness. It lasts a few weeks, but you can be contagious for several weeks and contagious until the blisters scab over.”

A few weeks of this state, while being infectious, is definitely no fun and would suck quite a lot, but there are worse things. The death rate from the last recorded outbreak was 2.1%, which also sucks a lot, but four of the six deaths were in immunocompromised people with AIDS. And again there are worse things.

Also it’s possible we are dealing with a worse thing. And even within the cluster there have been some changes?

More variation than we should randomly expect in a given time is, in some sense, to be expected, but it is at least weak evidence that something substantial may have changed. Here is Scott Gottlieb speculating that it could be (slightly) more contagious.

Here is a link to actual sequencing from Portugal for those looking to deep dive. These guys are also helping with tracking sequencing.

Despite those changes, the protein coding sequence appears identical, which indeed argues against evolutionary adaptation, but isn’t definitive on its own.

This next part confused me – 9% seems like a pretty low attack rate?

The other evidence also suggests things have not substantially changed, since it is all consistent with what we knew before, but this is far from definitive.

Another problem is identification. Since monkeypox is so rare outside of Africa, it makes sense that it’s not high on anyone’s differential diagnosis. This links to the contact tracing guide.

Cevik’s thread ends with links to additional resources, some of which I’ve incorporated into this post.

This thread claims they ‘ran a simulation’ recently of a monkeypox outbreak and got a ton of deaths, which is worth what you think it is (and several tweets have been deleted) except that this is certainly a possible outcome. Again, precautionary principle.

Should We Vaccinate Now?

Vaccination for Covid-19 will often knock you on your ass for a day or two, but otherwise it is an exceptionally easy sell. Vaccination for monkeypox is not so simple. One can say ‘vaccinate everyone now’ but the vaccine contains live virus and leaves a visible scar.

That’s different from saying we should produce and verify the vaccine now. That is a much better proposal, and we are indeed ramping up production at least somewhat. We should absolutely be getting our defenses ready. What stocks we have should be checked to confirm they’re still good and will work on what we are seeing in the wild. If we don’t have enough vaccine for everyone we might need to vaccinate worldwide, which we don’t (we used to at least have enough for all Americans but don’t now), we should get production started. I can’t imagine having that around is a bad idea in any case, especially given the Russia situation.

Sarah recommends the following package, which seems right:

- Record suspected cases of monkeypox, test them with PCR and record their contacts.

- Order enough smallpox/monkeypox vaccines for the whole population if necessary.

- Order stockpiles of tecovirimat and brincidofovir, the only FDA and EMA approved antiviral drugs for monkeypox, cowpox, and smallpox.

- Approve and purchase PCR tests for monkeypox. (Currently I don’t see any commercial manufacturers, but some research institutes like the Institute for Tropical Medicine in Antwerp conduct PCR testing.)

I would add to that we should verify that the vaccine still has its full effectiveness given the changes we have seen. I still expect it to fully work, but this is not the kind of thing we leave to chance.

We are not at the stage where challenge trials make sense here in terms of the virus, but we are absolutely at the ‘vaccinate some people and check their response, and their antibodies against the virus in the lab’ phase.

Well, actually, we are totally at a place where actual challenge trials make sense if one can do the math and live in a sane civilization. It only takes a tiny chance of a big problem to be worth endangering a few people to better understand what you’re up against, those few people can be well-compensated in money and also honor and status and would happily volunteer and so on, all the usual arguments. Worth noting.

Kai reports an official assessment of ‘moderate risk’ for men who have multiple male sexual partners, low for everyone else, and an expectation of more cases before it gets better. I agree that if things start out this way chances are you’re not at or too near the peak yet, especially given we only learned of the problem within a week and the incubation period is longer than that. However, to say ‘moderate risk’ right now seems kind of absurd when you think about it. We’re talking worldwide about less than a thousand cases. The risk literally right now is not yet moderate, no matter who you are. It’s traditional to scare such communities way out of proportion to the actual risks involved, and the tradition is being continued. That doesn’t mean the risk is zero, and the risk will rise – it might well be moderate soon enough. But that seems premature as I write this.

For everyone else, I’d expect it to remain low unless, again, things have changed more than we expect. And by low we mean essentially none.

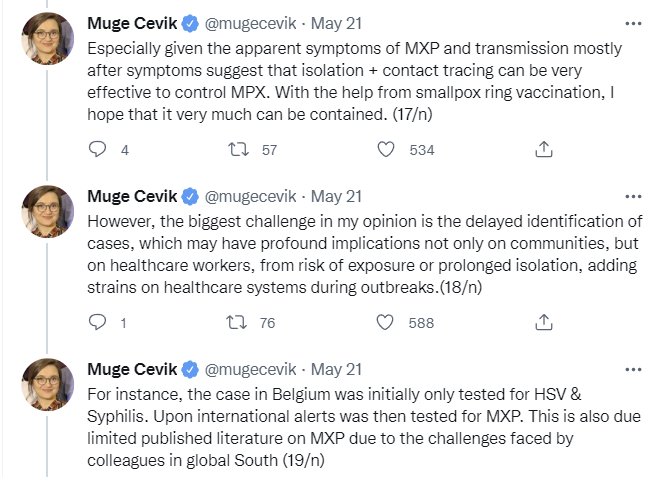

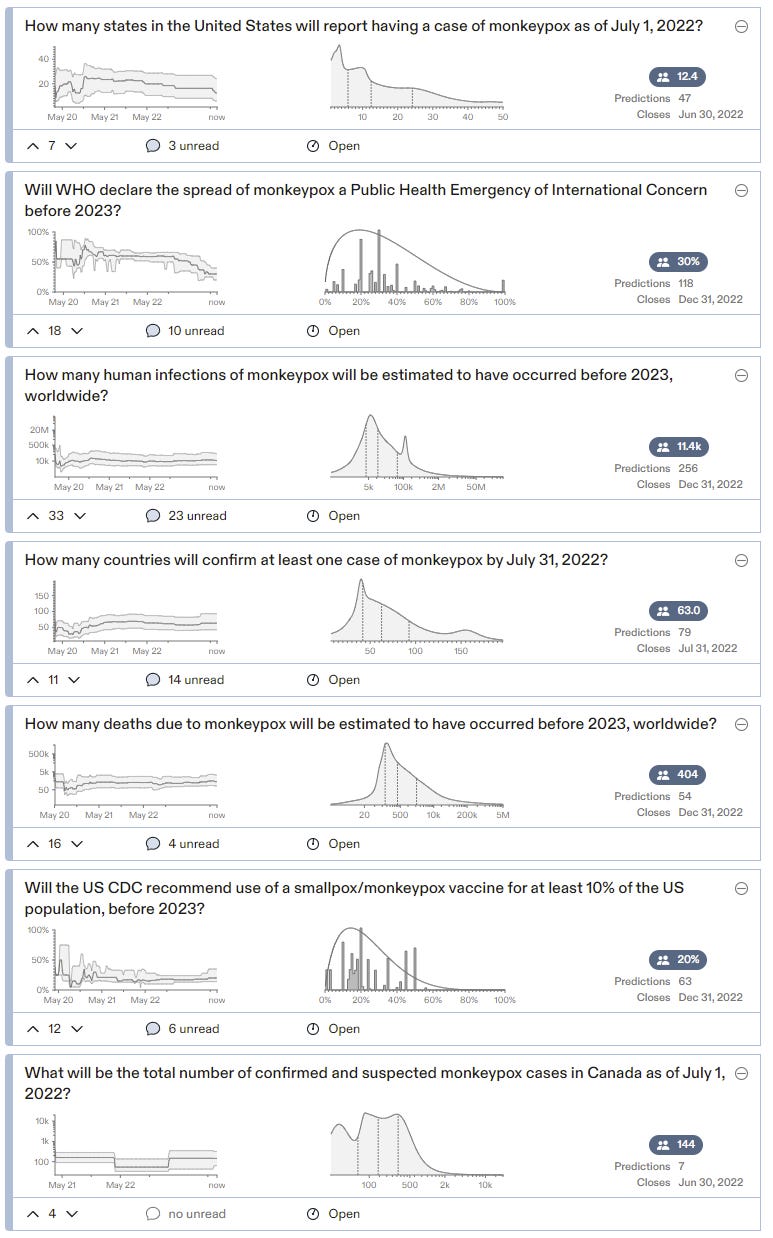

Metaculus

Now that we’ve covered the information sources it’s time to look at the prediction markets.

Once again Metaculus is giving us a solid baseline so that we can begin our thinking. They have ten markets.

This is a mix of some tangible short-term questions and attempts to represent the most important questions.

The estimate of R0 here is that it is likely to be well above 1. Yet predictions here do not anticipate either a mass vaccination campaign or a massive surge in cases.

That’s an odd combination.

If R0 is 1.48, given the current level of vaccination, something would have to prevent exponential growth. If it ends up being prior infections then these case expectations are way too low. If it ends up being vaccination, that’s predicted to only happen 20% of the time even for recommending it to 10% of the population. So this doesn’t seem like it’s telling a consistent story, unless the 1.48 refers to a specific narrow subpopulation, at which point it is plausible, and the subpopulation is small and could adjust its behavior, which would allow it all to make sense.
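To make the tension concrete, here is a minimal sketch of what unchecked spread at that R0 would look like, generation by generation. It assumes a constant reproduction number, no depletion of susceptible people, and no change in behavior. The function name and the starting count of 100 cases are illustrative choices of mine, not figures from Metaculus.

```python
def cases_per_generation(r0: float, initial_cases: float, generations: int) -> list:
    """Expected new cases in each generation under constant, unchecked spread.

    Generation 0 is the starting count; each later generation is the
    previous one multiplied by r0.
    """
    return [initial_cases * r0 ** g for g in range(generations + 1)]


if __name__ == "__main__":
    # 1.48 is the R0 estimate discussed above; 100 starting cases is an assumption.
    for g, cases in enumerate(cases_per_generation(1.48, 100, 10)):
        print(f"generation {g:2d}: ~{cases:,.0f} new cases")
```

Under these assumptions, ten generations multiply the per-generation case count roughly fifty-fold, which is why a population-wide R0 of 1.48 is hard to square with modest case forecasts unless something (a small subpopulation, behavior change, vaccination) breaks the assumption of constant spread.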

Something like that is my baseline scenario here. The vast majority of the time, almost all of us would have been better off if we had ignored the whole monkeypox thing entirely. But the only way for that to be true is to be in a world where a lot of people don’t ignore things in this reference class. So again, here we are.

My plan is totally not to worry about this again, and for this market to resolve to no.


The day has finally come: NVIDIA IS PUBLISHING THEIR LINUX GPU KERNEL MODULES AS OPEN-SOURCE! To much excitement and a sign of the times, the embargo has just expired on this super-exciting milestone that many of us have been hoping to see for many years. Over the past two decades NVIDIA has offered great Linux driver support with their proprietary driver stack, but with the success of AMD’s open-source driver effort going on for more than a decade, many have been calling for NVIDIA to open up their drivers. Their user-space software is remaining closed-source but as of today they have formally opened up their Linux GPU kernel modules and will be maintaining it moving forward. Here’s the scoop on this landmark open-source decision at NVIDIA.

I can’t believe this is happening.

NVIDIA is open sourcing all of its kernel driver modules, for both enterprise stuff and desktop hardware, under both the GPL and MIT license, it will be available on GitHub, and NVIDIA welcomes community contributions where they make sense. This isn’t just throwing the open source community a random bone – this looks and feels like the real deal. They’re even aiming to have their open source driver mainlined into the Linux kernel once API/ABI has stabilised.

This is a massive win for the open source community, and I am incredibly excited about what this will mean for the future of the Linux desktop.